Hands-Off Development

Recently, I noticed a shift in how I use coding agents. I switched from Cursor to Claude Code and now spend more time in the console than in an IDE.

I started feeling comfortable offloading larger chunks of work to agents, steering them at a higher level and writing less code by hand. Simon Willison noticed something similar: the models kept getting incrementally better until GPT-5.2 and Opus 4.5 reached an inflection point, and suddenly much harder coding problems opened up.

That tweet about the inflection point. Source

The 2025 workflow

My 2024-2025 style was mostly about giving detailed instructions on exactly what needed to be done. Most of the time, I already knew what I wanted. Without agents, I’d spend anywhere from 30 minutes to a few hours writing it myself. With Cursor, I gave precise instructions and enough context instead. I’m opinionated about how code should look, so I also added Cursor rules for the agent to follow.

A typical prompt looked like this:

Create a new Django model AccountSettings with a settings field to store account settings in a JSON field. Create a new model in the @users/models.py file. Create a Django model admin to modify settings. Update @users/services.py to create necessary service functions to modify user settings (use @teams/services.py as a role model). Create tests and ensure they pass. See @.cursor/rules/django-models.mdc and @.cursor/rules/django-tests.mdc. Read Linear task PROJECT-123 for more context.

This is a hypothetical prompt, but it’s a typical one. A few things to note:

- I share as much context as I can. Files to modify, similar code to use as a role model, Cursor rules to follow. Drag-and-drop with Cursor makes it easy to populate context from currently open files.

- Agents handle derivative code well. Tests, Django ModelAdmin classes, boilerplate. With clear project rules, they usually get these right without detailed instructions.

- I stopped copy-pasting things the agent can look up. “Read Linear task PROJECT-123 for more context” means the Linear MCP server populates the context. I don’t tell the agent what to do with it; I just help it see the bigger picture.

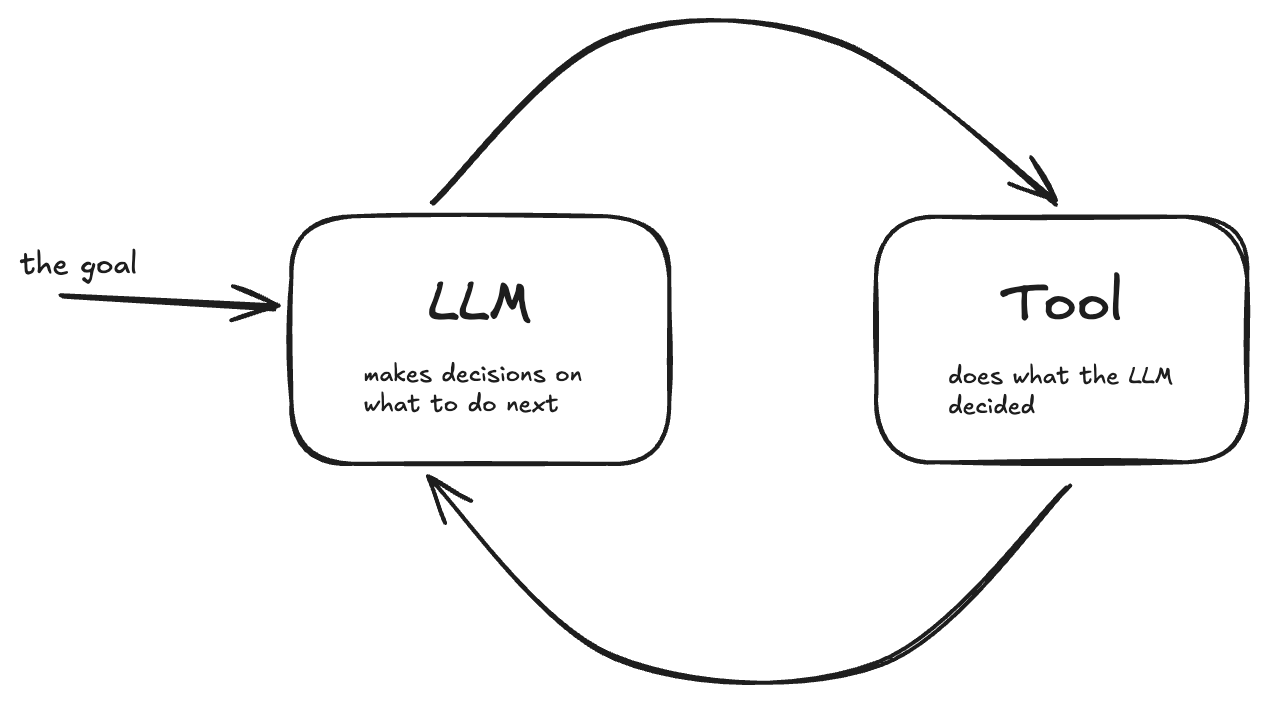

- “Ensure tests pass” was the big shift. This turns the agent from a one-step input-to-output machine into an autonomous agent running an agentic loop.

The simplest agentic loop definition, from this Temporal blog post:

The simplest agentic loop definition. Source
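A rough Python sketch of that loop, assuming a hypothetical `model` callable that reads the history so far and returns either a tool call or a final answer. `Step`, `agentic_loop`, `run_tests`, `apply_fix`, and the scripted model are illustrative stand-ins, not any real agent framework’s API. The point is the shape: ask, act, feed the observation back, repeat until the model decides it’s done. That repetition is what “ensure tests pass” buys you.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Step:
    """One decision from the (hypothetical) model: call a tool, or finish."""
    tool: Optional[str] = None   # tool to call next; None means "done"
    arg: str = ""                # argument passed to the tool
    answer: str = ""             # final answer once the model is done


def agentic_loop(
    model: Callable[[List[str]], Step],
    tools: Dict[str, Callable[[str], str]],
    goal: str,
    max_steps: int = 10,
) -> Tuple[str, List[str]]:
    """Ask the model for a step, run the tool, feed the result back, repeat."""
    history = [f"goal: {goal}"]
    for _ in range(max_steps):
        step = model(history)
        if step.tool is None:
            return step.answer, history  # the model decided the goal is met
        observation = tools[step.tool](step.arg)
        history.append(f"{step.tool}({step.arg}) -> {observation}")
    return "gave up: step budget exhausted", history


# Toy stand-ins for "ensure tests pass": one failing test and a scripted model.
state = {"bug_fixed": False}


def run_tests(_: str) -> str:
    return "PASS" if state["bug_fixed"] else "FAIL: test_default_settings"


def apply_fix(_: str) -> str:
    state["bug_fixed"] = True
    return "patched"


def scripted_model(history: List[str]) -> Step:
    last = history[-1]
    if "FAIL" in last:
        return Step(tool="apply_fix")     # tests failed: try a fix
    if "PASS" in last:
        return Step(answer="tests pass")  # tests passed: stop
    return Step(tool="run_tests")         # otherwise: check the current state


answer, history = agentic_loop(
    scripted_model, {"run_tests": run_tests, "apply_fix": apply_fix}, "make the tests pass"
)
print(answer)  # tests pass
for line in history:
    print(line)
```

The `max_steps` budget matters: a model that never converges would otherwise loop forever.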

What happens in 2025-2026

Two things changed. First, I realized agents can gather the information they need themselves if I give them enough tooling: MCP servers, CLI scripts, project instructions. Armin Ronacher’s Agentic Coding Recommendations post made this click for me. The idea is to invest in tools that are self-discoverable, fast, and reliable; that investment is what builds the flywheel of a more efficient agentic loop.
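To make “self-discoverable” concrete, here is a minimal sketch of a project task runner an agent could explore on its own through `--help`. This is my illustration, not code from Armin’s post, and everything in it is hypothetical: the `dev` name, its two commands, and the assumption that the project uses pytest and ruff. It echoes the exact command it runs and passes the real exit code through, so a failure is easy for the agent to read.

```python
import argparse
import subprocess
import sys
from typing import List, Optional

# Hypothetical project commands; pytest and ruff are assumptions about the stack.
COMMANDS = {
    "test": [sys.executable, "-m", "pytest", "-x", "-q"],
    "lint": [sys.executable, "-m", "ruff", "check", "."],
}


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(
        prog="dev",
        description="Project tasks. Run `dev <command> --help` for details.",
    )
    sub = parser.add_subparsers(dest="command", required=True, metavar="command")
    test = sub.add_parser("test", help="run the test suite, stopping at the first failure")
    test.add_argument("path", nargs="?", default="", help="optional test file or directory")
    sub.add_parser("lint", help="run lint checks")
    return parser


def resolve(argv: List[str]) -> List[str]:
    """Translate dev arguments into the exact command that will run."""
    args = build_parser().parse_args(argv)
    cmd = list(COMMANDS[args.command])
    if args.command == "test" and args.path:
        cmd.append(args.path)
    return cmd


def main(argv: Optional[List[str]] = None) -> int:
    cmd = resolve(sys.argv[1:] if argv is None else argv)
    print("$ " + " ".join(cmd), file=sys.stderr)  # echo the command for the agent's log
    return subprocess.run(cmd).returncode          # propagate the real exit code
```

Wired up with `if __name__ == "__main__": sys.exit(main())`, `dev --help` lists every command, and something like `dev test tests/test_users.py` (a hypothetical path) narrows the run to one file.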

Second, I started leaning harder on the agentic loop itself. Instead of detailed instructions, I give short high-level ones: “Address the PR feedback,” “CI tests failed, please explore what’s wrong and fix them.” The agent figures out how to use gh to get PR comments or fetch CI logs. Honestly, it knows the gh CLI better than I do.
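For a sense of what that lookup involves, here is a hypothetical Python wrapper around a few `gh` commands an agent might reach for. It is not what any particular agent actually runs; the PR number and run ID are placeholders, and it assumes `gh` is installed and authenticated inside the repository. Line-level review comments come from the REST endpoint through `gh api`, which fills in the `{owner}` and `{repo}` placeholders from the current repository.

```python
import subprocess
from typing import List


def pr_comments_cmd(pr: int) -> List[str]:
    # Conversation comments on the pull request.
    return ["gh", "pr", "view", str(pr), "--comments"]


def review_comments_cmd(pr: int) -> List[str]:
    # Line-level review comments via the REST API; gh substitutes {owner}/{repo}.
    return ["gh", "api", f"repos/{{owner}}/{{repo}}/pulls/{pr}/comments"]


def failed_ci_logs_cmd(run_id: int) -> List[str]:
    # Logs for only the failed steps of a GitHub Actions run
    # (run IDs can be found with `gh run list`).
    return ["gh", "run", "view", str(run_id), "--log-failed"]


def run(cmd: List[str]) -> str:
    """Run a gh command and return its stdout; raises if gh exits non-zero."""
    return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
```

Building argument lists instead of shell strings sidesteps quoting problems when the output is passed straight to `subprocess`.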

Side note: Yes, I say please to agents quite often. It feels more natural this way.

With these changes, I found that I don’t need an IDE to talk to agents anymore. I used to rely on the IDE mostly to populate context, but agents now gather context themselves. And since they got not just smarter but faster, I can be more hand-wavy with them, letting them figure out what needs to be learned.

I’ve heard some hardcore “agent developers” say they don’t open their IDE at all anymore because the console is all they need. I’m not in that camp: I rely on the IDE to make sense of the code. I test and review what agents write, but switching between the IDE and the console to give feedback doesn’t bother me, because I don’t micromanage.

In 2025, I described coding agents as “very diligent newcomers to your organization.” Not junior developers. You don’t babysit them. They’re senior, but it’s their first day, so you need to give detailed instructions and feed in a lot of context.

I’ve heard complaints from programmers that agents now do all the fun parts of working with code. I actually have more fun than before. I spend more time on hard problems, like how to properly architect a solution, and much less time on monkey coding.

The inflection point Simon talks about comes from agents getting gradually smarter and faster until they cross a line where you can trust them to figure out both the bigger picture and existing patterns on their own. They’re still on their first day with your codebase, but they learn the ropes much faster. That’s the shift.